StyleGAN

Revision as of 19:15, 4 March 2020

StyleGAN CVPR 2019

2018 Paper (arxiv)

CVPR 2019 Open Access

StyleGAN Github

StyleGAN2 Paper

StyleGAN2 Github

StyleGAN is an architecture by Nvidia that allows controlling the "style" of the GAN output by applying adaptive instance normalization (AdaIN) at different layers of the network.

StyleGAN2 improves upon this by...

Architecture

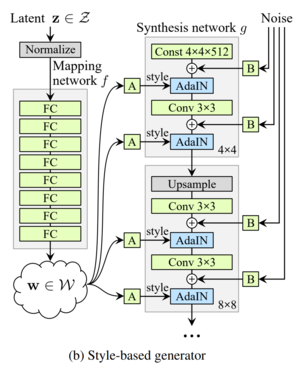

StyleGAN consists of a mapping network \(\displaystyle f\) and a synthesis network \(\displaystyle g\).

Mapping Network

The goal of the mapping network is to generate plausible "styles" in the form of a latent vector \(\displaystyle w\).

This style \(\displaystyle w\) is used by the synthesis network as input to each AdaIN block.

The mapping network \(\displaystyle f\) consists of 8 fully connected layers, each followed by a leaky ReLU activation.

The input and output of the mapping network are both vectors of size 512.
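As a rough illustration of the mapping network described above, here is a minimal NumPy sketch of 8 fully connected layers with leaky ReLU activations, mapping a 512-dimensional input to a 512-dimensional style. The names `init_mapping_params` and `mapping_network`, the random initialization, and the leaky ReLU slope of 0.2 are assumptions made for this example, not details taken from this page.

```python
import numpy as np

LATENT_DIM = 512   # size of the input and output vectors
NUM_LAYERS = 8     # number of fully connected layers
LEAK = 0.2         # assumed negative slope for the leaky ReLU

def init_mapping_params(rng):
    """Create one (weight, bias) pair per fully connected layer (illustrative init)."""
    return [
        (rng.standard_normal((LATENT_DIM, LATENT_DIM)) / np.sqrt(LATENT_DIM),
         np.zeros(LATENT_DIM))
        for _ in range(NUM_LAYERS)
    ]

def mapping_network(z, params):
    """Map latents z of shape (N, 512) to styles w of shape (N, 512)."""
    x = z
    for weight, bias in params:
        x = x @ weight + bias
        x = np.where(x > 0, x, LEAK * x)  # leaky ReLU after every layer
    return x

rng = np.random.default_rng(0)
params = init_mapping_params(rng)
z = rng.standard_normal((4, LATENT_DIM))
w = mapping_network(z, params)
print(w.shape)  # (4, 512)
```

In a trained model the weights would be learned jointly with the synthesis network; the random weights here only demonstrate the shapes involved.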

Synthesis Network

The synthesis network is based on progressive growing (ProGAN).

It consists of 9 convolution blocks, one for each resolution from \(\displaystyle 4^2\) to \(\displaystyle 1024^2\).
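The count of 9 blocks follows from doubling the resolution at each step, which a short Python check makes explicit:

```python
# One block per resolution, doubling from 4x4 up to 1024x1024.
resolutions = [2 ** k for k in range(2, 11)]
print(resolutions)       # [4, 8, 16, 32, 64, 128, 256, 512, 1024]
print(len(resolutions))  # 9
```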

Each block consists of upsample, 3x3 convolution, AdaIN, 3x3 convolution, AdaIN.
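To make the AdaIN step more concrete, the following NumPy sketch normalizes each feature map to zero mean and unit standard deviation, then applies a per-channel scale and shift computed from the style \(\displaystyle w\). The function name `adain`, the single affine layer (`affine_weight`, `affine_bias`) that turns the style into scales and shifts, and the tensor shapes are assumptions chosen for illustration.

```python
import numpy as np

def adain(x, w, affine_weight, affine_bias, eps=1e-8):
    """Adaptive instance normalization (illustrative sketch).

    x: feature maps of shape (N, C, H, W)
    w: styles of shape (N, D)
    affine_weight: (D, 2C) and affine_bias: (2C,) map w to a scale and shift per channel.
    """
    n, c = x.shape[:2]
    style = w @ affine_weight + affine_bias        # (N, 2C)
    scale = style[:, :c].reshape(n, c, 1, 1)
    shift = style[:, c:].reshape(n, c, 1, 1)
    # Normalize each feature map separately over its spatial dimensions.
    mean = x.mean(axis=(2, 3), keepdims=True)
    std = x.std(axis=(2, 3), keepdims=True)
    normalized = (x - mean) / (std + eps)
    return scale * normalized + shift

rng = np.random.default_rng(0)
channels, style_dim = 16, 512
x = rng.standard_normal((2, channels, 8, 8))
w = rng.standard_normal((2, style_dim))
affine_weight = rng.standard_normal((style_dim, 2 * channels)) * 0.01
# Bias initialized so that the scale starts near 1 and the shift near 0.
affine_bias = np.concatenate([np.ones(channels), np.zeros(channels)])
out = adain(x, w, affine_weight, affine_bias)
print(out.shape)  # (2, 16, 8, 8)
```

Because the statistics of each feature map are replaced by the style-derived scale and shift, each AdaIN block lets \(\displaystyle w\) control the features at that resolution.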

After each convolution layer, Gaussian noise with a learned variance is added to the feature maps.
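The noise injection can be sketched as follows. This example assumes a single-channel noise image per sample that is broadcast to all feature maps and multiplied by a learned per-channel scale; the function name `add_noise` and the parameter `noise_scale` are hypothetical names used only here.

```python
import numpy as np

def add_noise(x, noise_scale, rng):
    """Add scaled Gaussian noise to feature maps (illustrative sketch).

    x: feature maps of shape (N, C, H, W)
    noise_scale: learned per-channel scale of shape (C,) (assumed parameterization)
    """
    n, _, h, w = x.shape
    # One noise image per sample, shared across channels via broadcasting.
    noise = rng.standard_normal((n, 1, h, w))
    return x + noise_scale.reshape(1, -1, 1, 1) * noise

rng = np.random.default_rng(0)
x = rng.standard_normal((2, 16, 8, 8))
noise_scale = np.full(16, 0.1)  # stands in for learned values
out = add_noise(x, noise_scale, rng)
print(out.shape)  # (2, 16, 8, 8)
```

With `noise_scale` set to zero the feature maps pass through unchanged, so the network can learn how much stochastic detail to use in each channel.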