Transformer (machine learning model): Difference between revisions

Revision as of 19:33, 5 December 2019

"Attention Is All You Need" paper

A neural network architecture by Google.

As of this revision (December 2019), it achieves state-of-the-art results on many NLP tasks and has mostly replaced RNNs for these tasks.

Architecture

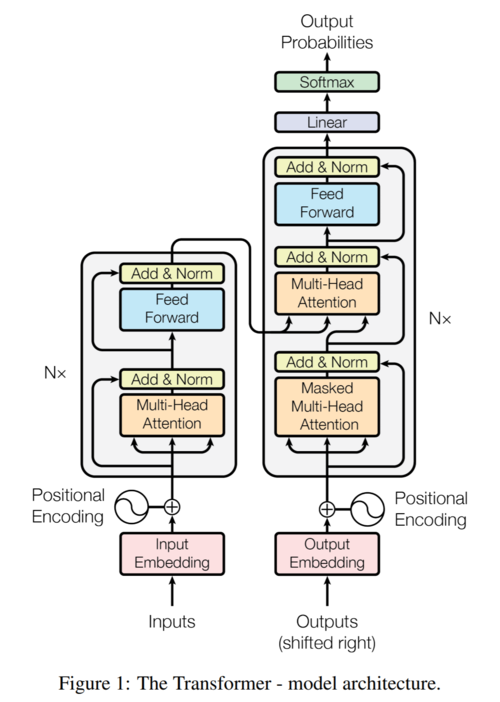

The Transformer uses an encoder-decoder architecture.

Both the encoder and decoder are composed of multiple identical layers, each of which has attention and feed-forward sublayers.

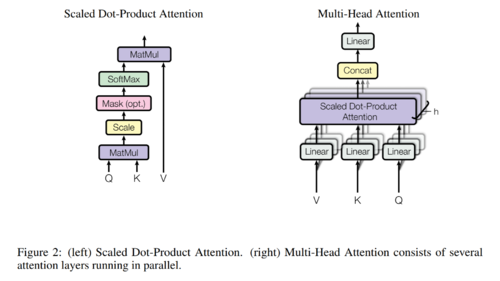

Attention

Attention is the main contribution of the transformer architecture.

The attention block outputs a weighted average of values in a dictionary of key-value pairs.

In the image above:

- \(\displaystyle Q\) represents queries (each query is a vector)

- \(\displaystyle K\) represents keys

- \(\displaystyle V\) represents values

The attention block can be represented as the following equation, where \(\displaystyle d_k\) is the dimension of the key vectors:

- \(\displaystyle \operatorname{SoftMax}\left(\frac{QK^T}{\sqrt{d_k}}\right)V\)
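
The equation can be sketched in plain Python to make the "weighted average of values" concrete. This is a minimal illustration, not a reference implementation: the function names `softmax` and `attention` and the tiny example matrices are assumptions made here, and real implementations use batched tensor operations with learned projections.

```python
import math

def softmax(row):
    # Subtract the max before exponentiating for numerical stability.
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def attention(Q, K, V):
    """Scaled dot-product attention: SoftMax(Q K^T / sqrt(d_k)) V.

    Q, K, V are lists of row vectors; K and V have the same number of rows.
    """
    d_k = len(K[0])
    out = []
    for q in Q:
        # Dot product of this query with every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in K]
        weights = softmax(scores)  # non-negative, sums to 1
        # Weighted average of the value vectors.
        out.append([sum(w * v[j] for w, v in zip(weights, V))
                    for j in range(len(V[0]))])
    return out

# Hypothetical example: one query that matches the first key more closely.
Q = [[1.0, 0.0]]
K = [[1.0, 0.0], [0.0, 1.0]]
V = [[10.0, 0.0], [0.0, 10.0]]
result = attention(Q, K, V)
```

Because the query is closer to the first key, the first value receives the larger weight, and the output is a blend of both values rather than a hard lookup.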

Encoder

The encoder receives as input the input embedding added to a positional encoding.
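
As a rough sketch of how the encoder input is formed, the following adds a positional encoding to each token embedding. The text above does not say which positional encoding is used; this example assumes the fixed sinusoidal encoding from the original paper, and the function names and the toy embeddings are hypothetical.

```python
import math

def positional_encoding(seq_len, d_model):
    # Assumed sinusoidal scheme: even dimensions use sin, odd dimensions use cos,
    # with each sin/cos pair sharing one frequency.
    pe = []
    for pos in range(seq_len):
        row = []
        for i in range(d_model):
            angle = pos / (10000 ** ((2 * (i // 2)) / d_model))
            row.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
        pe.append(row)
    return pe

def encoder_input(embeddings):
    # Element-wise sum of each token embedding and the encoding of its position.
    pe = positional_encoding(len(embeddings), len(embeddings[0]))
    return [[e + p for e, p in zip(emb, pos)] for emb, pos in zip(embeddings, pe)]

# Hypothetical embeddings for 2 tokens with d_model = 4.
embeddings = [[0.1, 0.2, 0.3, 0.4], [0.5, 0.6, 0.7, 0.8]]
x = encoder_input(embeddings)
```

The sum keeps the dimensionality of the embedding unchanged, so the position information is mixed into the same vector the attention sublayers consume.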

The encoder is composed of N=6 identical layers, each with 2 sublayers.

Each layer contains a multi-headed attention sublayer followed by a feed-forward sublayer.

Both sublayers are residual blocks: the output of each sublayer is added to its input, and the sum is then layer-normalized.
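
The layer structure described above can be sketched as follows. This is only an outline of the control flow under stated assumptions: the residual step is followed by layer normalization without learned gain or bias, `mean_attention` and `relu_ffn` are hypothetical stand-ins for the trained sublayers, and the same stand-ins are reused in every layer, whereas a real model has separate weights per layer.

```python
import math

def layer_norm(x, eps=1e-6):
    # Normalize one vector to zero mean and (approximately) unit variance.
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]

def residual(sublayer, xs):
    # Residual block: add the sublayer output to its input, then normalize
    # each position independently.
    ys = sublayer(xs)
    return [layer_norm([a + b for a, b in zip(x, y)]) for x, y in zip(xs, ys)]

def encoder(xs, self_attention, feed_forward, n_layers=6):
    # N layers; each applies attention, then feed-forward, both as residual blocks.
    for _ in range(n_layers):
        xs = residual(self_attention, xs)
        xs = residual(feed_forward, xs)
    return xs

# Hypothetical stand-in sublayers so the sketch runs without trained weights.
def mean_attention(xs):
    # Every position attends uniformly to all positions.
    d = len(xs[0])
    avg = [sum(x[j] for x in xs) / len(xs) for j in range(d)]
    return [avg[:] for _ in xs]

def relu_ffn(xs):
    # Position-wise ReLU, applied to each vector independently.
    return [[max(0.0, v) for v in x] for x in xs]

out = encoder([[1.0, 2.0, 3.0], [0.0, -1.0, 4.0]], mean_attention, relu_ffn)
```

The output has the same shape as the input, which is what allows identical layers to be stacked.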

Decoder

Resources

- Guides and explanations